Human Factors for RPAS

Why Pilots Make Mistakes & How to Prevent Them

Based on TP15263 Section 3 & Transport Canada Standards

CASARA Training - April 23, 2026 (60-90 min)

Why This Matters

- Pilot error contributes to roughly 75-80% of all aviation accidents

This isn't about bad pilots. It's about systems. Humans are predictable in certain ways.

- RPAS operations are no different

Drone pilots face the same cognitive limitations. You're looking at a screen, not out a windshield.

- Human factors training reduces risk

Not by making you superhuman. By making you aware of your limits.

- Your brain is both your best tool and your biggest liability

The same brain that got you here can also kill you. Know which mode it's in.

Learning Objectives (TP15263)

By the end of this session, you will be able to:

- Describe visual scanning techniques for collision avoidance

- Explain factors affecting pilot alertness & decision-making

- Identify signs of fatigue, stress, and impairment

- Apply the Swiss Cheese Model to accident analysis

- Recognize automation complacency risks

The Visual System

Vision is our PRIMARY sensory system for flight

Key factors affecting visual performance:

- Visual acuity (20/20 is normal; contrast matters)

A drone against a grey sky is hard to see.

- Accommodation & convergence (eye focus adjustments)

Eyes take ~1-2 seconds to refocus from the controller screen to the sky.

- Light/dark adaptation (adapting to darkness takes much longer than adapting to bright light)

Flying into shadow/cloud = compromised vision.

- Empty-field myopia (in an empty sky, the eyes relax and focus at ~1 m)

Solution: Actively focus at distance, use horizon/clouds as reference

Visual Scanning Techniques

Effective collision avoidance scanning:

- Series of regularly spaced eye movements

Methodical beats frantic.

- Focus for at least 1 second on each block area

Minimum time for the brain to register detail.

- Systematic pattern: outer edge → aircraft → outer edge → aircraft → controller

A fixed pattern prevents missed areas. Pilots with IFR training will recognize this: it's your RPAS T-scan.

- For VOs: scan in strips, pause at each fixation

- ⚠ Perspective illusion: Distant aircraft appear slower/smaller than reality

That Cessna 2 miles out? It's doing 120mph. Looks like it's crawling.
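The arithmetic behind that warning is worth doing once. A minimal Python sketch, assuming the slide's numbers (2 statute miles, 120 mph), a constant ground speed, and an aircraft heading straight for a hovering RPA; the function name is hypothetical:

```python
def seconds_to_close(distance_miles: float, speed_mph: float) -> float:
    """Seconds for an aircraft at constant speed to cover distance_miles.

    Assumes the RPA is hovering (stationary) and the aircraft is heading
    straight at it, so the closing speed equals the aircraft's speed.
    """
    # miles / (miles per hour) = hours; multiply by 3600 to get seconds
    return distance_miles * 3600.0 / speed_mph


t = seconds_to_close(2.0, 120.0)
print(f"Cessna 2 miles out at 120 mph reaches you in {t:.0f} s")
```

One minute from "crawling speck" to "in your hover". That minute has to cover spotting it, recognizing the threat, and getting out of the way.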

Orientation & Disorientation

Visual illusions are a leading cause of accidents

Common illusion scenarios:

- Sloping terrain (looks different than it is)

- Lighting intensity changes (clouds, sun position)

- Lack of surface texture (water, snow, empty fields)

No reference points = can't judge altitude or movement.

- Approach path confusion

Mitigation: Altitude caps, altitude minimums, GPS altitude, collision avoidance cameras

Your eyes lie. Instruments don't. Trust the numbers, verify with eyes.

Body Rhythms & Fatigue

Circadian rhythm = 24-hour cycle

- Influenced by light/dark, meals, activity

Your body has a clock. Fighting it = fighting biology.

- Disruption = degraded performance

Shift work, jet lag, and all-nighters can impair you as much as alcohol does.

Jet lag effects:

- Slowed reaction time (24 hours awake ≈ 0.10% BAC)

- Decision-making impairment

- Memory issues

- Accepting lower performance standards

Sleep Deprivation

Adults need 7-9 hours sleep

Sleep is restorative and essential for mental performance

Rules (CAR 901.19):

- No flying within 12 hours of consuming alcohol

- No flying while fatigued

- No flying while impaired by medications

Warning signs:

Microsleeps (brief blackout) • Slowed reactions (delayed inputs) • Poor judgment (thinking you're fine)

Medications & Substances

ANY medication can impair performance

- Over-the-counter: antihistamines, analgesics

First-generation antihistamines (e.g., Benadryl) = strong sedation.

- Prescription: check with a medical examiner

- Even "safe" drugs can have residual effects

Half-life matters. Took it at 10pm? Still in you at 6am.

- The 5x Half-Life Rule:

Look up the medication's half-life → multiply by 5 = wait time.

- Example: 8hr half-life × 5 = 40 hours

- Long-term use = builds up, may need longer

⚠ Never self-medicate before flying
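Why 5x? Each half-life clears half of what's left, so after five half-lives only 1/32 (about 3%) of a single dose remains. A minimal Python sketch, assuming simple first-order elimination of a single dose (repeated doses build up, as noted above); the function names are hypothetical:

```python
def wait_time_hours(half_life_h: float, multiplier: float = 5.0) -> float:
    """Wait time under the 5x half-life rule of thumb."""
    return half_life_h * multiplier


def fraction_remaining(elapsed_h: float, half_life_h: float) -> float:
    """Fraction of a single dose still in the body.

    Assumes first-order elimination (half is cleared every half-life)
    and a single dose; long-term use accumulates and needs longer.
    """
    return 0.5 ** (elapsed_h / half_life_h)


half_life = 8.0  # hours, the slide's example
print(f"Wait: {wait_time_hours(half_life):.0f} h")
print(f"Taken at 10pm, left at 6am: {fraction_remaining(8.0, half_life):.0%}")
print(f"Left after 5 half-lives: {fraction_remaining(40.0, half_life):.1%}")
```

With an 8-hour half-life, the 10pm dose is still half in your system at 6am.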

Alcohol

Depressant of the nervous system

- Slows everything: reflexes, judgment, vision

Affects all cognitive functions.

- Disrupts sleep patterns (hangover effect)

Sleep drunk is still drunk.

- The 12-hour rule is a MINIMUM

Some need 24. Know your body.

- Removed at a fixed rate; can't be sped up

Strong coffee doesn't help. Time is the only cure.

A hangover is still an effect of alcohol.

If you have to ask if it's been long enough, it hasn't
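"Removed at a fixed rate" means the body clears roughly the same amount of alcohol each hour, however much you drank, so a heavy night can outlast the 12-hour minimum. A minimal Python sketch of that idea. The elimination rate is an assumed population-average figure (about 0.015% BAC per hour; individuals vary widely), the peak BAC values are illustrative, and the function names are hypothetical:

```python
RULE_MINIMUM_H = 12.0   # CAR 901.19 minimum: 12 h after consuming alcohol
ASSUMED_RATE = 0.015    # assumption: % BAC cleared per hour (varies by person)


def hours_until_clear(peak_bac: float, rate: float = ASSUMED_RATE) -> float:
    """Hours for BAC to reach zero at a constant (zero-order) elimination rate."""
    return peak_bac / rate


def earliest_flight_h(peak_bac: float, rate: float = ASSUMED_RATE) -> float:
    """Hours to wait: the longer of the 12 h minimum and the clearance time.

    Hangover effects after BAC reaches zero are not modeled here.
    """
    return max(RULE_MINIMUM_H, hours_until_clear(peak_bac, rate))


for bac in (0.05, 0.08, 0.20):
    print(f"peak BAC {bac:.2f}% -> wait at least {earliest_flight_h(bac):.1f} h")
```

Under these assumptions, the first two cases are governed by the 12-hour rule, but the heavy night needs over 13 hours just to reach zero BAC.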

Aviation Psychology

Factors affecting decision-making:

- Stress (acute vs chronic)

Acute = "there's a plane" (focuses). Chronic = "bills, life" (distracts).

- Workload management

Too much = overwhelmed. Too little = bored. Both are dangerous.

- Situational awareness

Knowing what's around you, where you are, and what's coming.

- Attitude (overconfidence, complacency)

"I've done this 100 times" = dangerous phrase

The dangerous triad:

Fatigue + Stress + Complacency

All three together = accident waiting to happen

Situational Awareness

The ability to perceive, comprehend, and project

- Perceive

What's around me right now?

- Comprehend

What does it mean?

- Project

What's going to happen next?

Lost when:

- High workload

- Distractions

- Information overload

- Fatigue

Maintain SA through:

Systematic scanning • Verbal callouts • Crew communication • Cross-checking instruments

Automation Complacency

"The automation did it" is a dangerous mindset

You're still the pilot. The machine is a tool, not a replacement

Risks:

- Skills atrophy (lost manual flying ability)

If automation fails, can you fly manually?

- Reduced vigilance ("It's watching, so I don't have to")

- Mode confusion (What mode is it in? What will it do next?)

- Automation surprises ("Why did it do THAT?")

Mitigation:

Know your automation intimately • Stay engaged • Practice manual flying regularly

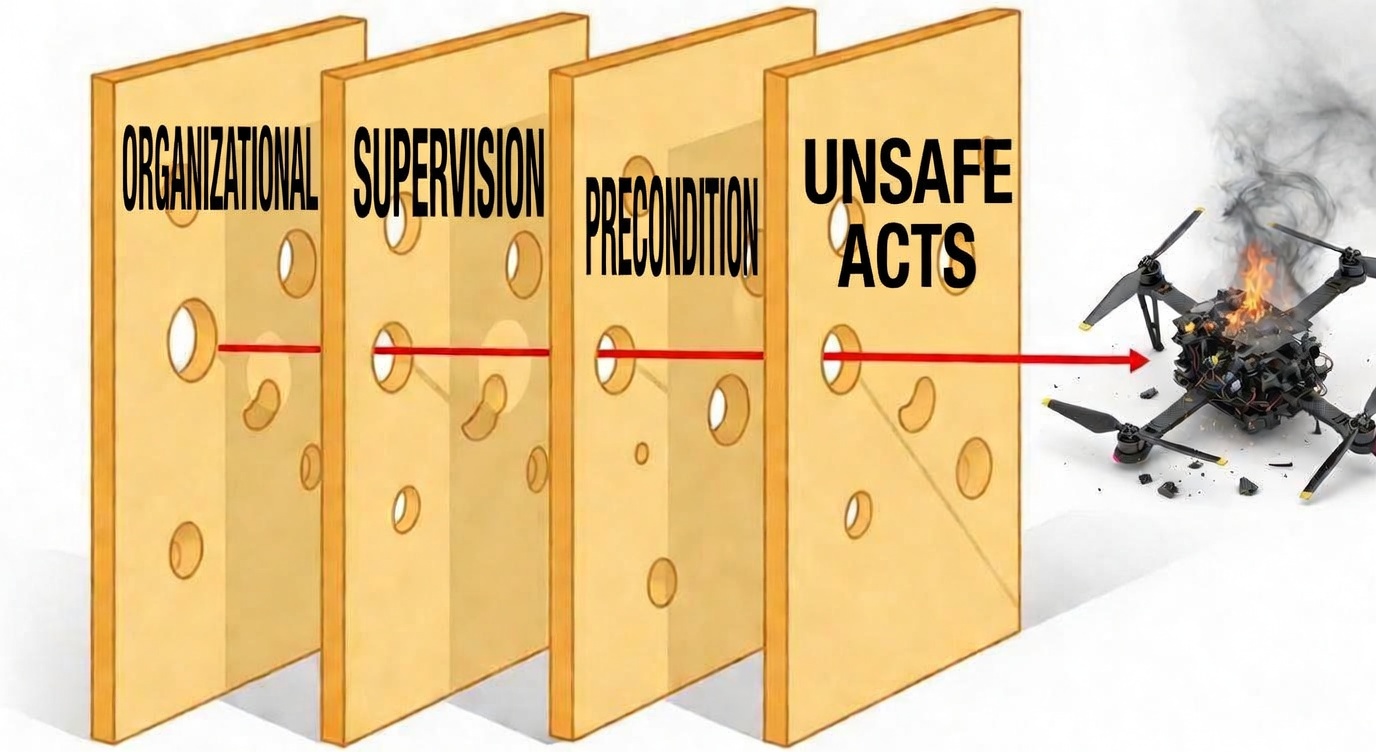

The Swiss Cheese Model

James Reason's accident causation model

Four layers of defense (each layer should stop the accident):

- 1. Organizational - Policies, culture, resources

HQ decisions, training programs, funding

- 2. Supervision - Training, mentoring, oversight

Did someone check your work?

- 3. Preconditions - Fatigue, equipment, crew state

What's your condition?

- 4. Unsafe Acts - Errors, violations, mistakes

The actual mistake

Accident = holes align across all layers

One hole = blocked. Four holes in a line = accident.
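"Holes in a line" is a logical AND across the layers: the hazard gets through only if every layer fails. A minimal Python sketch using the four layer names above; the `Layer` class and both functions are hypothetical teaching aids, not part of Reason's model or HFACS:

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Layer:
    name: str
    has_hole: bool  # True = this defence failed for this particular hazard


def accident_occurs(layers: List[Layer]) -> bool:
    """The hazard gets through only if every layer has a hole in line."""
    return all(layer.has_hole for layer in layers)


def blocking_layers(layers: List[Layer]) -> List[str]:
    """Names of the layers that stopped the hazard."""
    return [layer.name for layer in layers if not layer.has_hole]


defences = [
    Layer("Organizational", True),
    Layer("Supervision", True),
    Layer("Preconditions", True),
    Layer("Unsafe Acts", False),  # pilot keeps scanning and spots the traffic
]
print(accident_occurs(defences))   # False
print(blocking_layers(defences))   # ['Unsafe Acts']

defences[3].has_hole = True        # this time the pilot is watching the screen
print(accident_occurs(defences))   # True
```

Plugging any single layer is enough to stop this hazard. That's why "fix the system" and "you are the last line of defense" are both true.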

Swiss Cheese Model

When holes align across all layers → accident penetrates all defenses

HFACS Framework

Human Factors Analysis & Classification System

Expands Swiss Cheese into:

- Organizational Influences

Management, policies, culture, resource allocation

- Unsafe Supervision

Inadequate supervision, planned inappropriate operations

- Preconditions for Unsafe Acts

Personal factors (fatigue, stress), environmental factors

- Unsafe Acts (errors/violations)

Skill-based errors, decision errors, routine violations

Used by TSB, FAA, Transport Canada

This is the professional standard

Case Study - TSB A21O0069

The Buttonville Collision

Real accident. Real report. Real lessons.

August 10, 2021

- York Regional Police DJI Matrice M210

Professional unit, not hobbyists

- Cessna 172 on final to CYKZ

Landing traffic

- RPA hovering at 400ft AGL

Below cloud, in approach path

- COLLISION - no injuries

Lucky. Could have been fatalities.

Why this matters: Same situation could happen in SAR.

You vs manned aircraft. Who wins? Nobody.

Case Analysis - What Happened?

Timeline:

- 1256: RPA begins 2nd flight, hovers at 400ft

"Just a hover" = dangerous assumption

- 1257: Cessna joins right downwind Runway 15

Aircraft joining = traffic pattern

- 1301: Collision at 1.2NM from threshold

5 minutes later. Lots of time to see it.

The pilot was:

- Watching video feed (not airspace)

Eyes on the GCS screen = back turned to the sky.

- Had ADS-B receiver but Cessna had no ADS-B

The receiver couldn't detect an aircraft that wasn't broadcasting. Technology doesn't replace looking.

- No dedicated spotter assigned

A second set of eyes could have seen it.

Apply Swiss Cheese

1. ORGANIZATIONAL:

• Police unit requested recon under approach path

• No policy against hover location

2. SUPERVISION:

• No dedicated spotter

• Officer commanding stood nearby watching video

3. PRECONDITIONS:

• RPA pilot watching screen, not airspace

• Cessna no ADS-B (invisible to RPA)

• TV display lacked telemetry

4. UNSAFE ACTS:

• Hovering in active approach path

• Cessna see-and-avoid failed

Discussion

Questions for the class:

- Which layer had the most critical failure?

- What could have prevented this?

- How does this apply to CASARA operations?

Think about your own operations:

• Where are the holes in your cheese?

• What's your "video feed" distraction?

The 12 Core Human Factors

- 1. Fatigue - The killer. Most under-reported

- 2. Stress - "Hurry up" kills

- 3. Complacency - "It'll be fine"

- 4. Lack of Knowledge - Don't know what you don't know

- 5. Distraction - Focused on one thing, miss everything else

- 6. Situational Awareness - Lost SA = lost control

- 7. Communication - "I thought you said..."

- 8. Pressure - Mission completion > safety = wrong

- 9. Norms - "It's always been fine before"

- 10. Lack of Resources - Doing more with less = accident

- 11. Attitude - "It won't happen to me"

- 12. Fitness - Can you actually do this today?

Exercise - Scenario Analysis

Scenario: You're tasked with a search pattern near an airport. The operation commander keeps changing the search area. You're on your 3rd battery, tired, and haven't eaten. The weather is deteriorating.

Apply Swiss Cheese - identify failures in each layer:

- Organizational: No clear search area, changing demands

- Supervision: No one saying "stop, we're done"

- Preconditions: Fatigue (3rd battery), hunger, weather

- Unsafe Acts: Continuing when you should have landed

Key Takeaways

- 1. Pilot error is the #1 cause - understand why

It's not about bad pilots. It's about systems.

- 2. Fatigue kills - know your limits

If you have to ask, you shouldn't fly.

- 3. Automation is a tool - do not trust it blindly

It can fail. It can be misunderstood. You're still the pilot.

- 4. SOPs exist - they are written in blood

Someone died so that rule could exist.

- 5. Swiss Cheese works - use it for analysis

Not just for accidents. Use it pre-flight: "what could go wrong?"

- 6. You are the last line of defense

Everyone else can fail. You're there at the end

Resources

- TP15263 Section 3 - Human Factors (Transport Canada)

Your syllabus

- CAR 901.19 - Crew member fitness (alcohol, drugs, fatigue)

The rules

- TSB A21O0069 - Buttonville Collision Report

Read it. It's public.

- CASARA Spotters Guide

Your visual scanning reference

- HFACS Framework (Scott Shappell & Douglas Wiegmann)

The academic stuff behind Swiss Cheese

Questions?

- Discussion

- Scenarios

- Real-world applications

Stay safe. Fly smart.